Every few years, it is time to rebuild my servers. Regular bug fixes keep them running, but eventually the cruft has to be cleaned out. People move because conditions change and new opportunities appear, and servers are no different: the neighborhood that is the internet has become a noisier, dirtier place.

Why Your Server Needs a New Neighborhood

The world changes a lot between major upgrades. AI bots now scrape the internet, hammering websites and using up resources, and that calls for new solutions. We used to get by without a heavy deadbolt to keep the bots out; a simple lock would do. Moving isn’t just about a new view; it’s about surviving the new climate of the web.

I could just fix the things that are worn, but sometimes you need a complete replacement. Even with the same specs, a rebuild gives you a clean start and a better server.

With home servers, moves usually happen when I upgrade the hardware. With my VPSes, which are hosted online, I tend to build a new server, migrate to it, and then shut down the old one. A rebuild also lets me catch up on what has developed in the interim: new software options, new features, and new configuration settings.

So, how do you go about this?

Packing Your Digital Boxes: The Audit Before the Move

- Inventory the services you have: Docker containers, databases, servers. Note not just what each service is, but what it is used for.

- Toss out the junk you don’t need anymore: old software that is no longer in use, cron jobs that no longer need to run.
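
The inventory step can be partly scripted. What follows is a minimal sketch, not the process I actually use: it assumes a Linux host where `docker`, `systemctl`, and `crontab` may or may not be installed, and the helper names `parse_crontab` and `run_if_available` are made up for illustration. It only reads the current user's crontab, so system-wide files such as `/etc/crontab` (which carry an extra user field) would need separate handling.

```python
import re
import shutil
import subprocess

# Matches crontab environment lines such as MAILTO=me or PATH = /usr/bin
ENV_LINE = re.compile(r"^[A-Za-z_][A-Za-z0-9_]*\s*=")


def parse_crontab(text):
    """Return (schedule, command) pairs for the job lines in user crontab text."""
    jobs = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or ENV_LINE.match(line):
            continue  # blank line, comment, or environment assignment
        if line.startswith("@"):
            parts = line.split(None, 1)  # shortcut form: "@daily command"
            if len(parts) == 2:
                jobs.append((parts[0], parts[1]))
        else:
            fields = line.split(None, 5)  # five time fields, then the command
            if len(fields) == 6:
                jobs.append((" ".join(fields[:5]), fields[5]))
    return jobs


def run_if_available(cmd):
    """Run cmd and return its output lines, or [] if the tool is missing or fails."""
    if shutil.which(cmd[0]) is None:
        return []
    result = subprocess.run(cmd, capture_output=True, text=True)
    if result.returncode != 0:
        return []
    return result.stdout.splitlines()


if __name__ == "__main__":
    print("== Docker containers (running and stopped) ==")
    docker_cmd = ["docker", "ps", "-a", "--format",
                  "{{.Names}}\t{{.Image}}\t{{.Status}}"]
    for row in run_if_available(docker_cmd):
        print(row)

    print("== Running systemd services ==")
    systemd_cmd = ["systemctl", "list-units", "--type=service",
                   "--state=running", "--no-legend", "--plain"]
    for row in run_if_available(systemd_cmd):
        print(row)

    print("== Cron jobs for the current user ==")
    crontab_text = "\n".join(run_if_available(["crontab", "-l"]))
    for schedule, command in parse_crontab(crontab_text):
        print(f"{schedule}\t{command}")
```

Redirecting the output to a file gives you a checklist to annotate by hand: what each entry is for, and whether it makes the move or goes in the trash.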

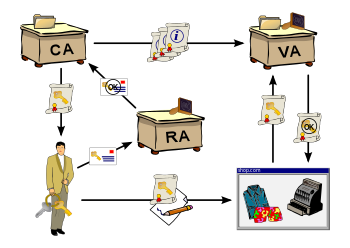

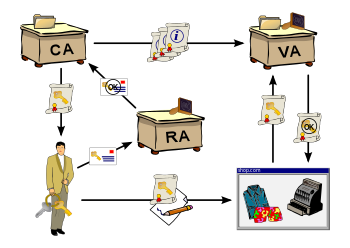

Security and Monitoring: The Modern Dilemma of Convenience vs. Control

Security keeps getting harder for the average person, which is why people turn to companies like Cloudflare to handle it for them. Cloudflare does offer a generous free plan, but it adds a dependency outside your control, and a free plan can easily turn into a paid trap. My firewall has held for many years, but it lacks modern management tools, and I still do everything in a text window. Like the people who use Cloudflare, I don’t always have the time to get down into the code, and I need a way to do quick monitoring on the go.

When a Total Rebuild is the Only Cure

This is a great time to review new operating systems, new servers, and, if you are sticking with what you have, new configurations. If you never rebuild anything, you also forget how your system works.

In the end, you will have a brand new server, ready to face new challenges, and you’ll be set for a few years. As I work through my own rebuild, I will be commenting on some of the tools I try along the way.

In a continuing effort to get the best combination of services and pricing, I often review my choice of provider. While it is a pain to migrate services, things do change over time.
